AI has quickly evolved from an auxiliary tool into a decisive factor in healthcare, finance, law enforcement, and national security. In these high-risk domains, AI systems do more than streamline processes; they influence life-changing decisions. The margin for error is very small when diagnosing diseases, approving loans, or predicting criminal behavior. As a result, tolerance for opaque, unverified, and potentially biased algorithms is shrinking.

A high-risk application is a system in which mistakes, bias, or failure can cause serious outcomes, such as monetary loss, legal penalties, or harm to human life. As AI becomes entangled in key infrastructure, the lack of formalized oversight mechanisms leaves systemic risk unmitigated. This is where AI auditing comes in as an invaluable safeguard.

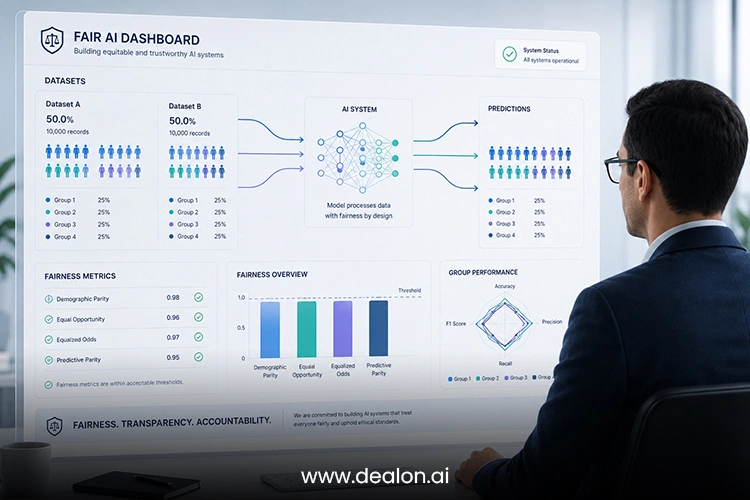

AI auditing is a comprehensive assessment of algorithms, datasets, and decision-making processes to verify that they meet ethical, legal, and technical requirements. As the stakes keep rising, it is becoming clear that voluntary compliance will not be enough. Regulatory agencies, stakeholders, and the public are converging on one demand: accountability should not be presumed but demonstrated.

Reason 1: Regulatory Pressure and the Global Push for AI Governance

Governments and other bodies are stepping up efforts to regulate AI, particularly in high-risk areas. Policies are being drafted and enacted to hold AI systems to standards of transparency, fairness, and accountability. The European Union's AI Act, for example, categorizes AI systems by risk level and sets strict conditions for high-risk ones, such as auditing and documentation requirements.

This wave of regulation is not confined to one region. Countries around the world are recognizing the need for consistent governance frameworks. As these frameworks mature, AI auditing will become a mandatory practice rather than a best practice. Organizations that fail to comply risk heavy fines, reputational damage, and restrictions on their operations.

In addition, regulatory compliance is not only a matter of avoiding penalties; it is a matter of trust. At a time when people are growing more skeptical of technology in general, a commitment to high-quality auditing standards can be a competitive edge. Firms that are proactive about auditing their AI will be better placed to adapt to the changing legal environment and remain credible with stakeholders.

Reason 2: Mitigating Bias and Ensuring Ethical Integrity

Algorithmic bias is one of the most urgent issues in AI deployment. It usually stems from skewed training data, incorrect assumptions, or historical inequalities embedded in datasets. In high-stakes applications, such biases can produce discriminatory results that worsen social inequalities and undermine ethical standards.

AI auditing offers a systematic way to identify and reduce these biases. By scrutinizing data sources, model behavior, and outcome patterns, auditors can detect anomalies and recommend corrective action. This is necessary to ensure that AI systems operate in a way that is equitable, inclusive, and in line with societal values.
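To make the idea of scrutinizing outcome patterns concrete, here is a minimal sketch of one check an auditor might run: comparing approval rates across groups. The group labels, the sample decisions, and the function names are all hypothetical, and the 0.8 cutoff is only a commonly cited rule of thumb, not a requirement stated in this article.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the share of favorable outcomes for each group.

    records: iterable of (group, approved) pairs, where approved is a bool.
    """
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        if approved:
            favorable[group] += 1
    return {group: favorable[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest one."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable outcome, so no gap to report
    return min(rates.values()) / highest


# Hypothetical loan decisions: group A approved 8 of 10, group B 5 of 10.
decisions = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

# Assumed threshold: flag the model for review when the ratio drops below 0.8.
needs_review = ratio < 0.8
print(rates, round(ratio, 3), needs_review)
```

A single ratio like this is only a starting point. A real audit would look at several fairness measures and at the context behind the numbers before drawing conclusions.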

Ethical integrity is no longer a marginal issue; it is a key requirement. Organizations are being held responsible not only for what their AI systems achieve but also for how they achieve it. Clear auditing procedures help organizations demonstrate their commitment to ethical practices, which in turn helps them earn the trust of users and stakeholders.

Moreover, ethical violations in AI can have far-reaching consequences, such as legal costs and public criticism. By implementing AI auditing, organizations can reduce these risks and make their systems more beneficial than harmful to society.

Reason 3: Enhancing Transparency and Explainability

Modern AI systems, especially those that rely on deep learning, can behave like black boxes whose decision-making is hard to understand. This opacity is unacceptable in high-risk situations. Stakeholders such as regulators, users, and affected individuals require clear explanations of how decisions are reached.

AI auditing improves transparency and explainability. By examining AI models in a systematic and documented manner, auditors can describe how the models work in terms that are understandable and that support accountability. This is vital in fields such as healthcare and finance, where decisions must be justifiable and traceable.
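As an illustration of what a traceable decision can look like, the sketch below records how much each input contributed to a single decision of a simple linear scoring model. The feature names, weights, and threshold are invented for the example. Deep learning models need dedicated attribution techniques, so treat this as a simplified stand-in rather than a general method.

```python
def explain_linear_decision(weights, bias, features, threshold):
    """Build an audit record for one decision of a linear scoring model.

    Each feature's contribution is its weight multiplied by its value, so
    the contributions plus the bias add up exactly to the final score.
    """
    contributions = {
        name: weights[name] * value for name, value in features.items()
    }
    score = bias + sum(contributions.values())
    # List the most influential features first to ease human review.
    ranked = dict(
        sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    )
    return {
        "score": score,
        "decision": "approve" if score >= threshold else "decline",
        "contributions": ranked,
    }


# Hypothetical credit-scoring model and applicant.
weights = {"income": 0.5, "debt_ratio": -2.0, "years_employed": 0.25}
applicant = {"income": 4.0, "debt_ratio": 0.5, "years_employed": 2.0}

record = explain_linear_decision(weights, bias=-1.0, features=applicant, threshold=0.0)
print(record)
```

Storing a record like this alongside each decision gives reviewers something specific to question: which factor drove the outcome, and by how much.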

Explainability is not merely a technical issue; it is a basic part of trust. People are more willing to accept and trust a system when they know how and why a decision was made. Opaque systems, on the other hand, breed mistrust and resistance.

By incorporating explainability into the auditing process, organizations can bridge the gap between technical complexity and human understanding. This not only eases compliance with regulatory requirements but also increases user confidence and engagement.

Reason 4: Preventing Catastrophic Failures and Systemic Risks

High-risk AI applications operate in settings where failures can cascade. The failure of an AI-based medical diagnostic system, for example, may result in misdiagnosis and inappropriate treatment. Likewise, errors in financial algorithms could cause major economic shocks. These risks are amplified in today's interconnected systems, which makes prevention a priority.

AI auditing is an important tool for detecting vulnerabilities before they turn into failures. By rigorously testing models under varied conditions, auditors identify weaknesses so that preventive measures can be taken. This proactive approach is needed to ensure the reliability and resilience of these systems.
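One simple form of testing under varied conditions is a stability check: nudge each input slightly and see whether the decision changes. The sketch below assumes a hypothetical `toy_classifier` and a 5% perturbation size, both chosen only for illustration.

```python
def is_unstable(predict, x, rel_noise):
    """Return True if nudging any single feature changes the predicted label."""
    baseline = predict(x)
    for i in range(len(x)):
        for factor in (1 - rel_noise, 1 + rel_noise):
            perturbed = list(x)
            perturbed[i] = x[i] * factor
            if predict(perturbed) != baseline:
                return True
    return False


def find_unstable_inputs(predict, inputs, rel_noise=0.05):
    """Collect the inputs whose decision flips under small perturbations."""
    return [x for x in inputs if is_unstable(predict, x, rel_noise)]


def toy_classifier(x):
    """Hypothetical model: flags a case as high risk above a fixed cutoff."""
    return "high_risk" if 2 * x[0] + x[1] > 10 else "low_risk"


# Hypothetical cases; the second one sits very close to the decision boundary.
cases = [(1.0, 2.0), (4.0, 1.9), (8.0, 5.0)]
unstable = find_unstable_inputs(toy_classifier, cases)
print(unstable)
```

Inputs that flip under such small changes are candidates for closer review, since a minor measurement error could change the outcome for the person affected.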

Beyond individual failures, AI auditing also addresses systemic risk. The more interconnected AI systems become, the greater the potential for disruption. Auditing offers a structure for evaluating the overall effect of AI deployment, allowing organizations to anticipate and reduce risks at scale.

In high-risk applications, the cost of failure is often vastly higher than the cost of prevention. Investing in strong auditing procedures is not only a regulatory necessity but also a strategic one. Organizations that prioritize risk management will be better positioned to navigate the complexities of an AI-driven environment.

Reason 5: Building Sustainable Trust in an AI-Driven Future

Any technological ecosystem relies on trust. Without it, even the most advanced systems are difficult to adopt. In the context of AI, trust rests on transparency, accountability, and reliability, all of which are supported by auditing.

As AI penetrates ever more spheres of everyday life, users are becoming more critical and skeptical. Concerns about privacy, bias, and misuse are shaping public awareness. In such a setting, companies should not only comply with the law but also show a commitment to social responsibility in their AI practices.

AI auditing is one of the most tangible means of creating and maintaining this trust. Organizations can demonstrate their commitment to ethical and trustworthy AI by submitting their systems to external audits and publishing the findings. This transparency builds trust among users, regulators, and investors.

Furthermore, trust is not a one-time achievement; it has to be continually earned and reinforced. Regular auditing ensures that AI systems keep up with changing standards and expectations. This ongoing approach is required to maintain confidence in an ever-evolving technology landscape.

Trust is a strategic asset that, in the long run, will underpin the success of organizations that cultivate it. In this light, AI auditing is not only a compliance exercise but also a pillar of sustainable innovation.

Conclusion

The trajectory of AI is clear: as systems become more powerful and widespread, the demand for accountability will intensify. AI auditing will become mandatory in high-risk applications, where the impact of failure is severe. This change is driven by regulatory pressure, ethical concerns, the need for transparency, the need to manage risk, and the general imperative to build trust.

By acknowledging this inevitability and acting proactively, organizations will not only achieve compliance but also gain a competitive advantage. By incorporating auditing into the AI development lifecycle, they will be able to build systems that are not only intelligent but also responsible and trustworthy.

The future of AI will be determined not only by technological progress but also by the principles that govern its use. AI auditing is a pivotal step toward a more responsible and ethical digital environment, one in which innovation and responsibility are balanced.